This code computes the coefficients for inter-calibrating night-light data across DMSP satellites.

local filename " _night - light_Africa_adjusted.
local w_f "C:\\Users\\matteo\\Desktop\\Conflict and social cohesion\\Data\\Night-lights\\DMSP\\DMSP intercalibration\"

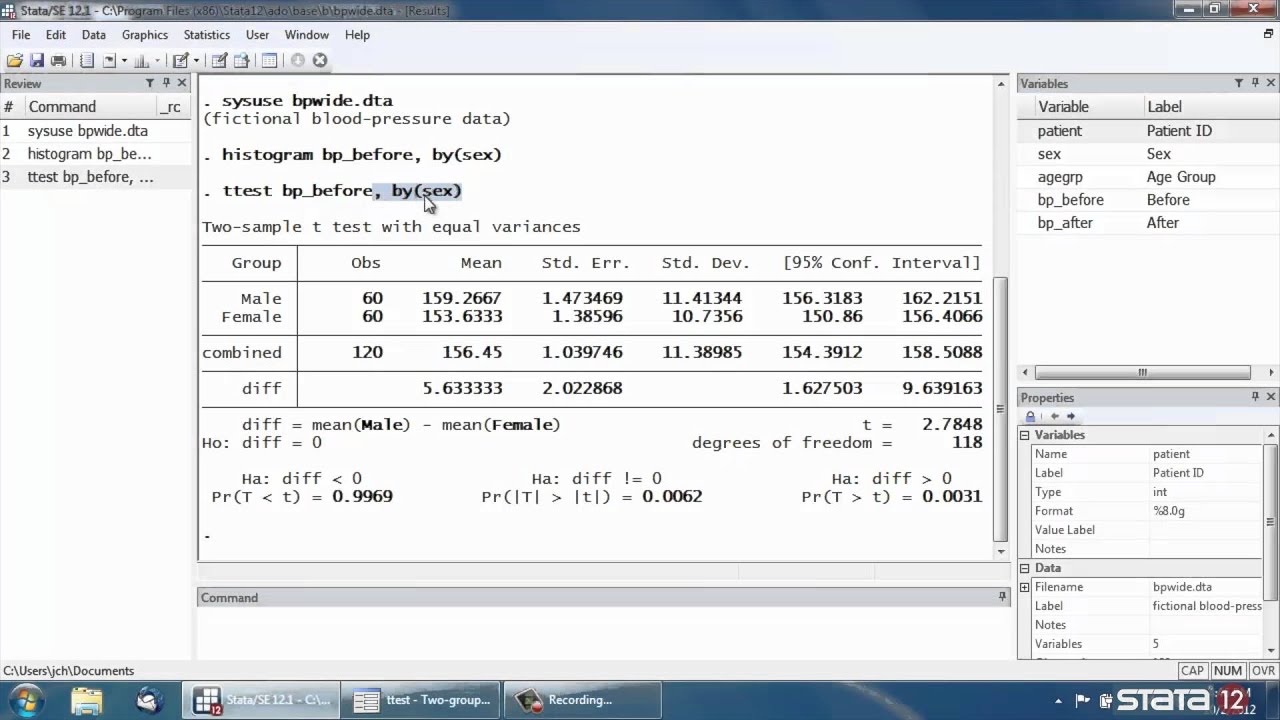

Note: 1.dum79#5.c_lable omitted because of collinearity

. probit strat dum79 teamtreatment ib1.c_lable dum79#o1.c_lable

probit strat dum79 teamtreatment ib1.c_lable dum79#i(2 3 4 5).c_lable

So I ran two probit regressions with different commands: one with i(2 3 4 5).c_lable and the other with o1.c_lable. I expected the same result, but the second command omitted the 5th dummy variable.

The authors argue their command is easier to use than others, some of which apparently don’t work anymore. The major issue for all of these commands appears to be the source dataset. It turns out it is tough to process thousands of observations while at the same time geocoding addresses (as opposed to using latitude and longitude) and getting back both distance and travel time. Most of us need the latter for our research projects.

Here are some hints for using the command:

- Be careful with addresses that have a comma in the street address (e.g., 123 Elm St, #1). I had to pull those out to get the address to geocode.
- Be sure to include the country. This is easy to forget when using addresses within a single country.
- You can process 15,000 addresses per month for free, or pay for additional access (e.g., $49/month for 100K).
- Reduce the dataset to the bare minimum number of variables and avoid Stata IC. The command must create a huge number of temporary variables, because I couldn’t get it to run when I ran it in my IC version by mistake.
- You may have to partition the dataset into chunks and process them separately, see my code below. Even in SE on my souped up desktop, I was getting a weird Stata memory error ("no room to add more variables because of width"). Width refers to the number of bytes required to store a single observation; it is the sum of the widths of the individual variables. The maximum width allowed is 1,048,576 bytes. I managed to get it to work by processing only 500 cases at a time.
- It is really slow: it takes about 3-5 minutes to process 500 observations, and I have Google Fiber 100 Mbps internet. I corresponded with a tech at, who said calling their database via the API should be almost instantaneous. So it must be how the georoute command is coded in Stata. The timer option did not speed up the process. Nor did the speed differ between using a free account and a paid account.
- Check the first few slices of the dataset to make sure the geocoding is working, otherwise you will end up wasting a lot of time rerunning observations.

This command will create all possible pairs of the cities in the two files. For example, Iowa City will be paired up with every city in the distance.dta file, as will Boston, Houston and Chicago.

Background: Patients with limited English proficiency experience disparities in health care access, quality, costs, and outcomes. Providing qualified medical interpreting services (MIS) in the health care setting can reduce these disparities. Unfortunately, health organizations face logistical and financial difficulties in meeting the need for qualified medical interpreters.

Introduction: This descriptive review evaluated travel, time, and cost savings associated with video interpreting services compared to traditional in-person services.

Materials and Methods: We conducted a retrospective review of all inpatient and outpatient medical interpreting encounters at a large academic hospital delivered through video and in person between 20. Outcome measures included interpreter travel distance, time, and cost for in-person encounters and savings associated with avoided travel for services provided through video.

Results: We reviewed 281,701 interpreting encounters, including 249,357 in person and 32,344 by video. Video encounters occurred both for on-site and off-site visits.
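The hint about partitioning the dataset and processing 500 cases at a time can be sketched roughly as follows. This is a minimal sketch, not the code from the original post: the file names (`addresses.dta`, `chunk_1.dta`, and so on, `addresses_routed.dta`) are hypothetical, and the routing call itself is left as a comment because its exact options are not given here.

```stata
* Minimal sketch: process a dataset in slices of 500 observations.
* All file names below are hypothetical placeholders.
use "addresses.dta", clear

local chunk = 500                        // slice size that worked in the post
local N = _N                             // total number of observations
local nchunks = ceil(`N' / `chunk')

forvalues i = 1/`nchunks' {
    local first = (`i' - 1) * `chunk' + 1
    local last  = min(`i' * `chunk', `N')

    preserve
    keep in `first'/`last'               // keep only the current slice

    * Run the geocoding/routing command on this slice here
    * (call omitted: its syntax is not shown in this post).

    save "chunk_`i'.dta", replace        // store the processed slice
    restore
}

* Stack the processed slices back into a single dataset.
clear
forvalues i = 1/`nchunks' {
    append using "chunk_`i'.dta"
}
save "addresses_routed.dta", replace
```

Running the loop for only the first one or two slices first (for example, `forvalues i = 1/2`) is a cheap way to check that the geocoding is working before committing to the full run.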
